Unveiling the Underlying Currents of China's AI at the Yunqi Conference

At the 2024 Yunqi Conference, Alibaba CEO Wu Yongming discussed the rapid rise of AI and the potential of generative AI to reshape the digital and physical worlds.

In a rare public appearance at the 2024 Yunqi Conference, Wu Yongming, CEO of Alibaba Group and Chairman and CEO of Alibaba Cloud, shared an optimistic view of the future of AI.

He highlighted that the past 22 months saw unprecedented AI development, but "we are still in the early stages of the AGI revolution."

Looking ahead, he emphasized that the biggest potential of generative AI is "not just creating a few super apps for mobile screens, but transforming the digital world and reshaping the physical world."

It's been 22 months since OpenAI launched ChatGPT. In this period, the global AI community and investment circle have gone through a full cycle—from intense hype to a slowdown in innovation, followed by rising doubts.

However, with OpenAI's release of the o1 model, AI is now penetrating various industries, and market confidence is recovering.

Wu's speech comes at a critical moment, making it even more relevant.

How should we view this AI revolution? What will AGI's final form be? How can we harness this technological revolution?

Wu's insights, and this year's Yunqi Conference as a whole, offer some of the clearest answers to these questions.

The main forum turned into a stage for AI entrepreneurs. Alibaba Cloud invited top model developers, autonomous driving experts, and humanoid robotics scientists.

At the venue, Alibaba Cloud showcased nearly every AI startup and product from China, with a 40,000-square-meter exhibition area packed with more than 100 AI applications from around the world.

Of the 400+ topics and forums, 150+ were focused on large models.

Humanoid robots, which drew attention at the World Robot Conference, were once again stars at Yunqi.

At last year's conference, the focus was on the upstream of AI: computing power and ecosystems. This year, Yunqi became a snapshot of China's AI industry.

AI is moving beyond servers and into every sector.

The Yunqi Conference, which began in 2009, has witnessed every wave of China’s tech development and provides clues to understanding the present.

The Quiet Evolution of a New Industrial Revolution

After ChatGPT's rise, defining the scale of this wave has become a hot topic for top minds globally.

The internet is a common comparison.

Marc Andreessen, co-founder of a16z (Andreessen Horowitz), once suggested that while the internet connects computers, AI, especially large models, is like a new type of computer.

Wu Yongming offered his own perspective on this question, which is widely debated among CEOs.

He noted that in the past 30 years, the essence of the internet revolution was connection—connecting people, information, businesses, and services to enhance collaboration and create immense value, ultimately changing how we live.

Generative AI, however, makes productivity itself more intelligent, creating greater intrinsic value.

“This value could be 10 or even 100 times more than what the mobile internet achieved,” Wu predicted. As the leader of China’s largest cloud provider, he witnesses firsthand the undercurrents of the Chinese AI market.

As he mentioned at Yunqi: “Today, nearly all our clients and CTOs are using AI to rebuild their products. A surge of new demand is driven by GPU computing power, and many existing applications are being reprogrammed with GPUs. AI computing is accelerating in industries like automotive, biomedicine, industrial simulation, weather forecasting, education, enterprise software, mobile apps, and games.”

Traditional AI focuses on simulating human perception, while generative AI elevates machine intelligence to thinking, reasoning, and creativity.

Wu elaborated in his speech: “Generative AI gives the world a unified language—tokens. These can represent text, code, images, videos, or sound. AI models can tokenize data from the physical world to understand everything from human movement to writing, composing, painting, and programming. After understanding, AI can mimic humans to perform tasks in the physical world, sparking a new industrial revolution.”

The internet changed how humans “connect” with others, information, businesses, and services. Large models, by contrast, are transforming both front-end interaction and back-end production processes.

Many of these industrial changes are starting quietly, unnoticed by the public.

Take DeepModeling as an example.

As a leader in “AI for Science,” the company uses AI and multi-scale simulation algorithms to solve key scientific problems in fields like biomedicine, energy, and material sciences, developing the next-gen micro-scale design and simulation platform.

DeepModeling's Uni-Mol large model can generate molecules and predict their properties. In drug discovery, it allows molecules with high binding affinity to be generated directly inside a protein's binding pocket.

With Alibaba Cloud’s high-performance computing, DeepModeling has increased the maximum amino acid sequence length for single predictions to 6.6k, covering 99.992% of known protein sequences.

Meanwhile, Topstar, an industrial robotics company from Guangdong, integrated Alibaba Cloud’s large models to enhance robot control, enabling robots to complete complex tasks like palletizing, painting, and assembly through simple voice commands. Generative AI is gradually unfolding its potential.

Soon, most physical objects will be AI-powered, creating the next generation of products and forming a synergistic connection with cloud-driven AI.

Opportunities of Generative AI in Transforming the Physical World

Mobile internet was born in 2008 and had gained widespread industry recognition by 2012, a journey of four to five years.

Generative AI, by contrast, won global consensus almost immediately after ChatGPT's debut, with tech giants around the world investing heavily and without hesitation.

One key reason is that while mobile internet connects the physical world, generative AI can actually transform it.

At the Yunqi Conference, Wu Yongming emphasized, "We shouldn't just look at the future through the lens of mobile internet. The real potential of generative AI isn’t about creating a few super apps for phone screens, but taking over the digital world and transforming the physical one."

The auto industry is already undergoing such a shift.

Earlier autonomous driving systems relied on manually written code—hundreds of thousands of lines—yet still couldn't cover all driving scenarios.

Xpeng's Chairman and CEO, He Xiaopeng, mentioned that autonomous driving research began in 1925, but after nearly a century, it still only worked in limited scenarios.

"No single person can create rules for every driving situation, even in this specialized task," He explained.

With end-to-end large model training, AI can now learn from massive amounts of human driving data, giving cars driving abilities beyond most human drivers.

He Xiaopeng predicted, "In the next 36 months, even the most average users will be able to drive in any city like a seasoned driver."

Wang Xingxing, CEO of Unitree Robotics, shared a similar experience. He was once opposed to building humanoid robots, but large models opened new possibilities.

Previously, enabling robots to perform human-like complex tasks seemed unsolvable, as human actions are infinite.

Even something as simple as pouring a cup of tea involves hundreds of fine motions. Earlier, these actions had to be manually programmed by experts. Large models now provide robots with the ability to form a "world model" that understands the essence of tasks and how to perform them.

Humanoid robots offer two major advantages. First, today's world is optimized around human behavior, making humanoid robots best suited to navigate it. Second, general-purpose robots enjoy significant economies of scale.

Only through mass production can cost advantages be realized, making robots truly accessible.

Wu Yongming pointed out, "Robotics will be the next industry to undergo a massive transformation. In the future, all movable objects could become intelligent robots, whether it's a robotic arm in a factory, a crane on a construction site, a worker in a warehouse, a firefighter at an emergency scene, or even a pet dog in the home."

In Wu's vision of the future, factories will be filled with robots, directed by AI large models, to produce more robots.

Today, every household has a car; in the future, each might have two or three robots.

AI-powered digital systems connected to AI-enabled physical systems will greatly boost productivity and revolutionize the efficiency of the physical world.

The speed at which this happens depends on the evolution of AI infrastructure.

We Are Still in the Early Stages of AI Infrastructure

Throughout history, the rise of new applications has always relied on infrastructure improvements.

The proliferation of 4G, 5G, and smartphones led to the explosive growth of short videos.

Infrastructure like highways and railways paved the way for China’s leading e-commerce and logistics industries.

Take internet infrastructure as an example.

As of June 2024, China had 67.12 million kilometers of fiber optic cable, 1.17 billion internet access ports, and 11.88 million mobile phone base stations, including 3.917 million 5G base stations.

The prosperity of AGI applications will similarly depend on a unified and robust computing infrastructure. From the 2012 AlexNet model to 2017's AlphaGo Zero, computing power consumption increased by 300,000 times.

ChatGPT emerged from the backing of tens of thousands of A100 chips on Microsoft Azure, costing hundreds of millions of dollars.

According to an OpenAI report, since 2012 the computing power used in the largest AI training runs has doubled roughly every 3.4 months. By 2018, it had grown about 300,000 times, whereas a Moore's Law pace of doubling every two years would have yielded only a sevenfold increase over the same period.
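The gap between the two growth rates is easier to appreciate with a quick back-of-the-envelope calculation. The sketch below is illustrative only: it assumes a doubling period of 3.4 months for AI training compute and 24 months for Moore's Law, and the function and constant names are made up for this example.

```python
import math

def months_to_reach(growth_factor: float, doubling_months: float) -> float:
    """Months needed to grow by `growth_factor` when doubling every `doubling_months`."""
    return math.log2(growth_factor) * doubling_months

def growth_over(months: float, doubling_months: float) -> float:
    """Growth factor accumulated over `months` when doubling every `doubling_months`."""
    return 2.0 ** (months / doubling_months)

# Assumptions for illustration, not figures verified by this article.
AI_DOUBLING_MONTHS = 3.4      # assumed doubling period for AI training compute
MOORE_DOUBLING_MONTHS = 24.0  # assumed two-year doubling period for Moore's Law
GROWTH = 300_000              # the 300,000-fold increase cited above

elapsed_months = months_to_reach(GROWTH, AI_DOUBLING_MONTHS)
moore_growth = growth_over(elapsed_months, MOORE_DOUBLING_MONTHS)

print(f"Months needed for {GROWTH:,}x growth: {elapsed_months:.1f}")
print(f"Moore's Law growth over the same span: {moore_growth:.1f}x")
```

Under these assumptions, a 300,000-fold increase takes about 62 months, a little over five years, while a two-year doubling period yields only about sixfold growth over the same span. That is the same order of magnitude as the sevenfold figure quoted above.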

As AI moves to transform the physical world, the cost of computing power for training and inference will skyrocket, making cloud computing and AIGC a natural pairing.

Cloud computing’s centralized power, flexible deployment, pay-as-you-go model, and lower costs make it almost the only solution in the face of expensive and scarce computing power.

Whether it's training or inference, large models cannot do without the cloud.

At Yunqi, Wu mentioned that over 50% of the new demand for computing power is driven by AI, and AI computing has become mainstream.

This trend will continue to expand.

Over the past year, Alibaba Cloud has invested heavily in AI computing, but demand still far exceeds supply.

The traditional CPU-dominated computing system is rapidly shifting to a GPU-dominated one, with AI computing accelerating into every industry.

Currently, leading model training requires 4-5 times more computation each year, and China’s AI computing scale is expected to grow at a compound annual rate of 33.9% from 2022 to 2027. Model parameters are growing 10-fold, and datasets are growing 50-fold, placing higher demands on storage.
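To put these rates side by side, the short sketch below compounds each one over the five years from 2022 to 2027. It is a rough illustration, not a forecast: it reads "4-5 times more computation each year" as an annual multiplier of 4x to 5x, which is an interpretation, and the function name is hypothetical.

```python
def compound(annual_multiplier: float, years: int) -> float:
    """Total growth factor after `years` of multiplying by `annual_multiplier` each year."""
    return annual_multiplier ** years

YEARS = 2027 - 2022  # the five-year window cited above

# China's AI computing scale: 33.9% compound annual growth (figure from the text).
china_factor = compound(1.0 + 0.339, YEARS)

# Leading model training: assumed reading of "4-5 times" as a 4x to 5x yearly multiplier.
frontier_low = compound(4.0, YEARS)
frontier_high = compound(5.0, YEARS)

print(f"Computing scale at 33.9% CAGR over {YEARS} years: {china_factor:.2f}x")
print(f"Training compute at 4x-5x per year: {frontier_low:,.0f}x to {frontier_high:,.0f}x")
```

On this reading, a 33.9% compound rate multiplies computing scale by about 4.3 over five years, while a 4x to 5x yearly multiplier would push frontier training compute up by three orders of magnitude. That gap is one way to see why demand keeps outrunning supply.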

To improve efficiency in autonomous driving model training, Xpeng Motors partnered with Alibaba Cloud in 2022 to build China's largest AI computing center in Ulanqab, increasing training efficiency by over 600 times.

Due to rapid advancements in large models, Alibaba Cloud has expanded this center's computing power by more than four times to 2.51 EFLOPS, providing Xpeng with a stable and efficient computing base to support rapid model iterations and deliver smooth driving experiences nationwide.

At this year’s Yunqi Conference, the "world's first AI car," the Xpeng P7+, was also unveiled.

This car is equipped with a leading, large, end-to-end model. Over the past two years, Xpeng and Alibaba Cloud have quadrupled their AI computing capacity.

He Xiaopeng announced plans to deepen collaboration with Alibaba Cloud, pushing the boundaries of autonomous driving with end-to-end large models.

Wu Yongming is clearly aware of this trend.

Since declaring “AI-driven, public cloud-first,” Alibaba Cloud has aggressively invested in AI infrastructure.

At this year’s Yunqi Conference, Wu reaffirmed, “Alibaba Cloud is investing in AI technology development and infrastructure at an unprecedented scale. We are expanding our network clusters to tens of thousands of GPUs, redesigning AI infrastructure from chips, servers, and networks to cooling, power supply, and data centers.”

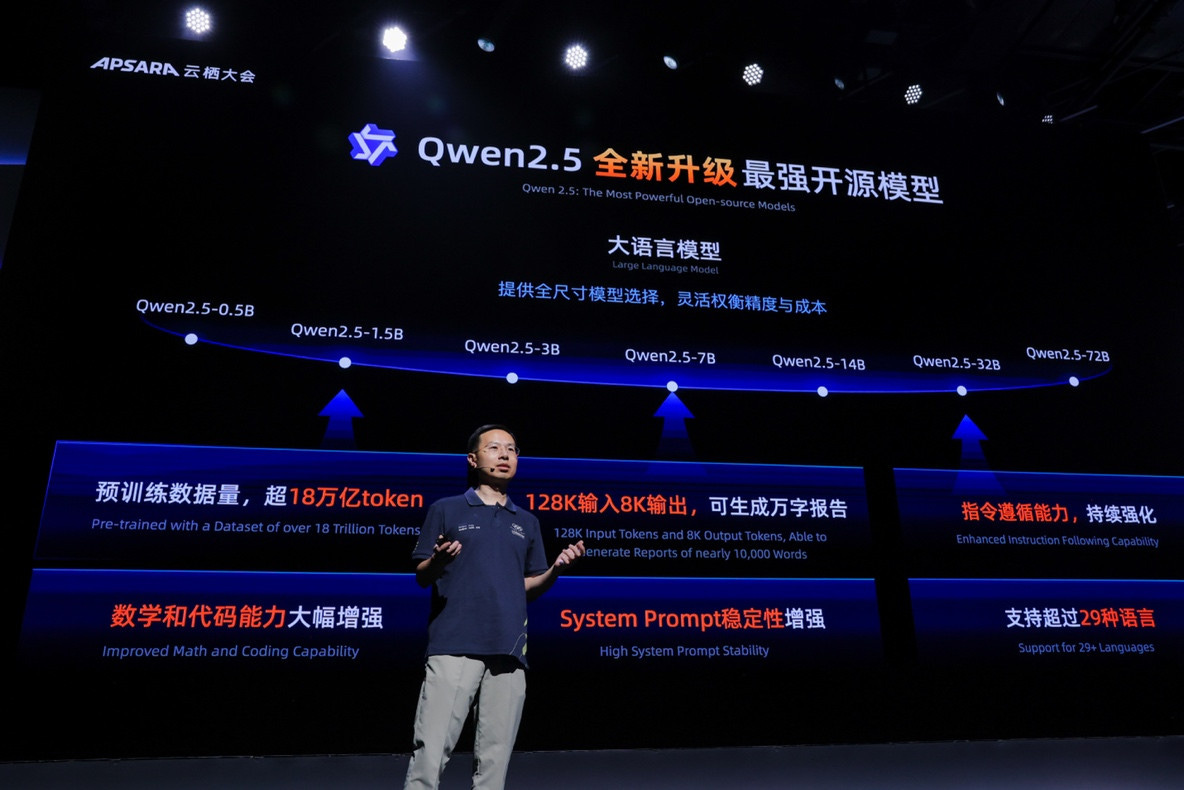

Alibaba Cloud also showcased upgrades across its AI product family.

The newly launched Panjiu AI server supports 16 GPUs per machine, with 1.5 TB of memory, and can predict GPU failures with 92% accuracy. Alibaba Cloud ACS introduced GPU container computing power, improving performance through topology-aware scheduling. The AI-optimized high-performance network architecture, HPN 7.0, can stably connect more than 100,000 GPUs, improving model training performance by over 10%. Alibaba Cloud's CPFS file storage provides 20 TB/s of data throughput, offering massive storage for AI computing. The PAI AI platform now supports flexible scheduling for large-scale training and inference, with over 90% effective AI computing utilization.

At the same time, Alibaba Cloud announced significant price cuts for its Tongyi Qianwen models, with reductions of up to 85%, bringing token prices to as low as 0.3 yuan per million tokens.
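A small calculation shows what these prices mean in practice. The sketch below is illustrative only: it assumes the 85% cut and the 0.3 yuan price refer to the same model, which the announcement as summarized here does not confirm. The 50-million-token workload and the function names are hypothetical.

```python
PRICE_YUAN_PER_MILLION = 0.3  # lowest token price cited above
MAX_CUT = 0.85                # largest price reduction cited above

def cost_yuan(tokens: int, price_per_million: float = PRICE_YUAN_PER_MILLION) -> float:
    """Cost in yuan of processing `tokens` tokens at a given price per million tokens."""
    return tokens / 1_000_000 * price_per_million

def price_before_cut(price_after: float, cut: float) -> float:
    """Price before a fractional reduction `cut` (for example, 0.85 for 85%)."""
    return price_after / (1.0 - cut)

# Assumption: the 85% cut and the 0.3 yuan price describe the same model.
implied_old_price = price_before_cut(PRICE_YUAN_PER_MILLION, MAX_CUT)

# Hypothetical workload: 50 million tokens.
workload_tokens = 50_000_000
new_cost = cost_yuan(workload_tokens)
old_cost = cost_yuan(workload_tokens, implied_old_price)

print(f"Implied price before the cut: {implied_old_price:.2f} yuan per million tokens")
print(f"Cost of {workload_tokens:,} tokens: {old_cost:.2f} yuan before, {new_cost:.2f} yuan after")
```

Under that assumption, the earlier price works out to about 2 yuan per million tokens, and the hypothetical workload drops from roughly 100 yuan to 15 yuan.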

Over the past six months, Alibaba Cloud’s Bailian platform has continued to lower the barriers for large model access, promoting broader adoption of AI.

There is a clear relationship between model costs and social innovation. As AI infrastructure costs drop, innovation will surge on the other end.